TBD

Additional Resources

Evaluation Script

- Official evaluation script: TBD

Linguistic Resources

- CUTEXT. See it on GitHub.

Medical term extraction tool.

It can be used to extract relevant medical terms from tweets.

- SPACCC POS Tagger. See it on GitHub.

Part-of-speech tagger for Spanish medical-domain corpora.

It can be used as a component of your system.

- NegEx-MES. See it on Zenodo and on GitHub.

A system for negation detection in Spanish clinical texts based on the NegEx algorithm.

It can be used as a component of your system.

- AbreMES-X. See it on Zenodo.

Software used to generate the Spanish Medical Abbreviation DataBase.

- AbreMES-DB. See it on Zenodo.

Spanish Medical Abbreviation DataBase.

It can be used to fine-tune your system.

- MeSpEn Glossaries. See it on Zenodo.

Repository of bilingual medical glossaries made by professional translators.

It can be used to fine-tune your system.

- Occupations gazetteer. See it on Zenodo.

A gazetteer of occupations extracted from a set of terminologies (DeCS, ESCO, SnomedCT and WordNet) and Stanford CoreNLP.

Word embeddings

- FastText Spanish medical embeddings. See them on Zenodo.

Word and subword embeddings trained for the Spanish medical domain.

They can be used as a component of your system.

- FastText Spanish Twitter embeddings. See them on Zenodo.

Word and subword embeddings trained with Spanish Twitter data related to COVID-19.

They can be used as a component of your system.

Baseline

More resources TBD

Evaluation

The evaluation will be done on CodaLab. SocialDisNER submissions will be ranked by precision, recall, and F1-score computed over the extracted ENFERMEDAD [disease] mentions, counting only exact span matches (F1-score is the primary metric). A prediction is correct only if it has the same beginning and ending offsets as the Gold Standard annotation.
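Since the official evaluation script is still TBD, the following is only an illustrative sketch of strict (exact-offset) scoring, not the official scorer. It assumes micro-averaging over all mentions in the test set and identifies each mention by `(tweets_id, begin, end)`; `strict_prf` is a hypothetical name. Duplicate predictions of the same span are counted once because the mentions are stored in sets.

```python
from typing import Iterable, Set, Tuple

# A mention is identified by (tweets_id, begin, end). The type is always
# ENFERMEDAD in this task, so it is left out of the key (assumption).
Mention = Tuple[str, int, int]


def strict_prf(gold: Iterable[Mention], pred: Iterable[Mention]) -> Tuple[float, float, float]:
    """Micro-averaged precision, recall and F1 over exact span matches (sketch)."""
    gold_set: Set[Mention] = set(gold)
    pred_set: Set[Mention] = set(pred)
    # A prediction is a true positive only if both offsets equal a gold span.
    tp = len(gold_set & pred_set)
    precision = tp / len(pred_set) if pred_set else 0.0
    recall = tp / len(gold_set) if gold_set else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall > 0 else 0.0)
    return precision, recall, f1


if __name__ == "__main__":
    gold = [("1357198223706894339", 12, 19), ("1357198223706894339", 21, 26)]
    # The second prediction starts one character early, so it does not count.
    pred = [("1357198223706894339", 12, 19), ("1357198223706894339", 20, 26)]
    print(strict_prf(gold, pred))  # (0.5, 0.5, 0.5)
```

Under strict matching, a boundary error of a single character turns a would-be hit into both a false positive and a false negative, which is why exact offsets matter so much for the ranking.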

Evaluation dates

July 11, 2022, midnight – July 15, 2022, UTC

Submission format

Participating teams must generate a *.tsv file containing the annotations detected in each of the test documents, using the following column structure:

- tweets_id: Name of the file from which the disease mention has been extracted.

- begin: Character offset where the detected mention starts.

- end: Character offset where the detected mention ends.

- type: Always ENFERMEDAD for this task.

- extraction: The mention text as extracted from the tweet.

| tweets_id | begin | end | type | extraction |
|---|---|---|---|---|
| 1357198223706894339 | 12 | 19 | ENFERMEDAD | alergia |
| 1357198223706894339 | 21 | 26 | ENFERMEDAD | covid |
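The column structure above can be produced with a short script. The following is a minimal sketch, assuming a header row is expected (as in the example) and that `end - begin` equals the mention length, which holds for both example rows; `format_predictions` and `write_predictions` are hypothetical helper names, not part of any official task tooling.

```python
from pathlib import Path
from typing import Iterable, Tuple

# Column order as listed in the submission format description.
HEADER = ("tweets_id", "begin", "end", "type", "extraction")

# (tweets_id, begin, end, extraction); the type column is always ENFERMEDAD.
Row = Tuple[str, int, int, str]


def format_predictions(rows: Iterable[Row]) -> str:
    """Render predicted disease mentions as tab-separated text with a header row."""
    lines = ["\t".join(HEADER)]
    for tweets_id, begin, end, extraction in rows:
        # Assumption: end - begin equals the mention length, as in the example rows.
        if end - begin != len(extraction):
            raise ValueError(f"offsets {begin}-{end} do not match {extraction!r}")
        # Tabs or line breaks inside a mention would corrupt the column layout.
        if any(ch in extraction for ch in "\t\r\n"):
            raise ValueError(f"mention contains a tab or newline: {extraction!r}")
        lines.append("\t".join([tweets_id, str(begin), str(end), "ENFERMEDAD", extraction]))
    return "\n".join(lines) + "\n"


def write_predictions(rows: Iterable[Row], path: Path) -> None:
    """Write the predictions as UTF-8 bytes so line endings stay '\\n' on every OS."""
    path.write_bytes(format_predictions(rows).encode("utf-8"))


if __name__ == "__main__":
    example = [
        ("1357198223706894339", 12, 19, "alergia"),
        ("1357198223706894339", 21, 26, "covid"),
    ]
    print(format_predictions(example), end="")
```

Raising an error on inconsistent offsets catches a common off-by-one bug before it silently costs points under exact-match scoring.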

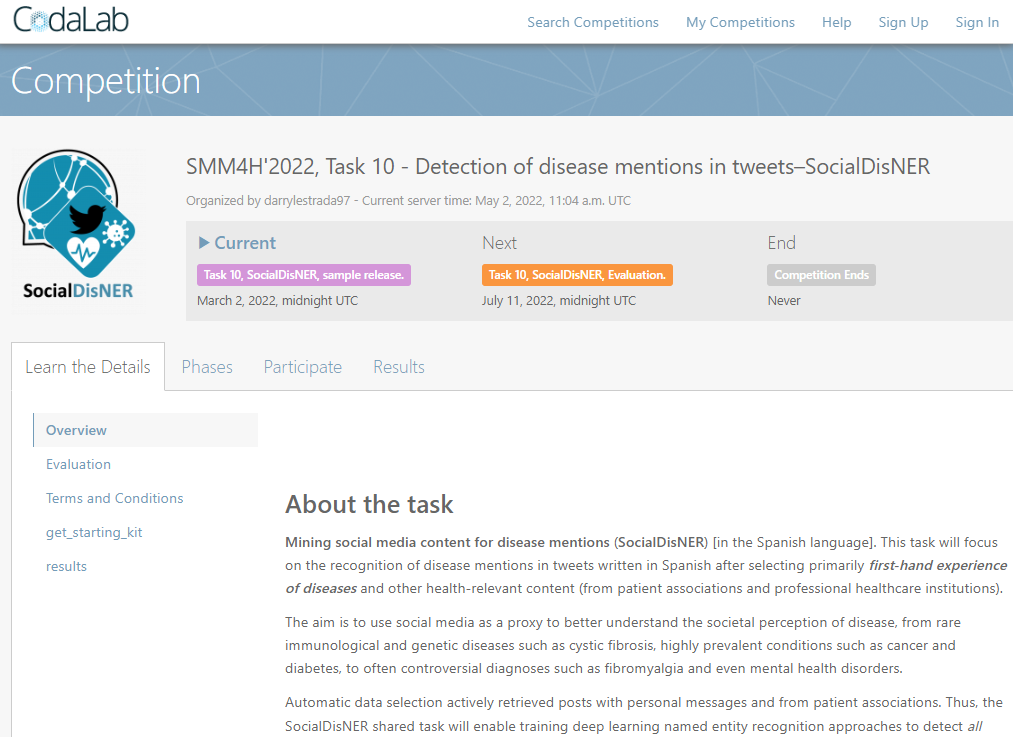

CodaLab

Predictions for each subtask should be contained in a single .tsv (tab-separated values) file. This file, and only this file, should be compressed into a .zip file, which you then upload as your submission. For the evaluation phase, which starts on July 11, you may add the validation set to the training set for training purposes.
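The packaging step can be scripted with Python's standard `zipfile` module. This is a minimal sketch, assuming the .tsv should sit at the root of the archive; `package_submission` and the file names used with it are hypothetical.

```python
import zipfile
from pathlib import Path


def package_submission(tsv_path: Path, zip_path: Path) -> Path:
    """Compress a single predictions .tsv file into a .zip for upload (sketch)."""
    if tsv_path.suffix != ".tsv":
        raise ValueError(f"expected a .tsv predictions file, got {tsv_path.name}")
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        # arcname drops the directory part so the file lands at the archive root
        # (assumption: the scorer looks for the .tsv at the top level).
        archive.write(tsv_path, arcname=tsv_path.name)
    return zip_path
```

For example, `package_submission(Path("predictions.tsv"), Path("submission.zip"))` produces an archive containing exactly one entry, `predictions.tsv`, which matches the "this file and only this file" requirement.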

Codalab: https://codalab.lisn.upsaclay.fr/competitions/3531

- Register and wait for approval

- To make a submission: Participate -> Submit/View Results -> Click on Task -> Click Submit -> Select File

Refresh the page to follow your submission's status, which goes from Submitted -> Running -> Finished. Scores are then available in the submission's output files, and you can choose to submit your best scores to the Leaderboard.

- To view results: Results -> Click on Task -> View results in table

You will be allowed to make unlimited submissions during the validation stage. During the evaluation stage only 2 submissions will be allowed.

Registration

To participate in the task, please be sure to complete the following steps:

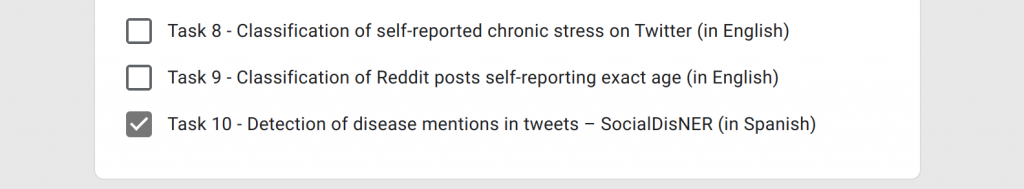

- Register using the Google Form. Please choose a team name you will remember, since it will be used throughout the competition, and select the Task 10 option:

- Log in to (or register on) our CodaLab task to upload your predictions:

Important note: Student registrants are required to provide the name and email address of a faculty team member who has agreed to serve as their advisor/mentor for developing their system and writing their system description (see below). By registering for a task, participants agree to run their system on the test data and upload at least one set of predictions to CodaLab. Teams may upload up to three sets of predictions per task. By receiving access to the annotated tweets, participants agree to Twitter’s Terms of Service and may not redistribute any portion of the data.

Results

TBA

Annotation Guidelines

The SocialDisNER corpus of the SMM4H 2022 track was manually annotated by medical experts following the SMM4H-SocialDisNER guidelines. These guidelines were adapted from previous versions used to annotate EHRs and medical literature (clinical case reports) and contain rules for annotating mentions of diseases in health-related Spanish tweets. They also include some considerations regarding the mapping of annotations to SNOMED-CT concept codes.

Guidelines were created de novo in three phases:

- First, an initial version of the guidelines was adapted from clinical annotation guidelines after annotating an initial batch of ~500 tweets and outlining the main difficulties posed by social media data.

- Second, a stable version of the guidelines was reached by iteratively annotating sample sets of the SocialDisNER corpus until quality control was satisfactory.

- Third, the guidelines are iteratively refined as manual annotation continues.

The annotation guidelines are available at Zenodo.